Commentary: The problem with pausing data centers

Published in Op Eds

Congress is now being asked to pause data center construction. In March, Bernie Sanders and Alexandria Ocasio-Cortez announced a bill to halt the buildout of the infrastructure that makes advanced AI possible. The framing is familiar: the pace is too fast, the risks too great, the window to act too narrow. The only path forward is to preserve the status quo.

But this policy would do far more than ban new infrastructure. Pausing data centers is pausing AI. Computing is not incidental to AI development; it is the foundation. Constraining it doesn’t buy time for safety. It just rations progress through politics, and hands that rationing power to whoever controls the permitting process. This is not a thoughtful safety-first agenda. Instead, it is a naked effort to pause AI altogether.

This is not the first time we’ve seen calls for blunt intervention where nuance is required. Almost three years to the day before this latest announcement, a group of just over 31,000 technologists, academics, and public intellectuals signed an open letter demanding that AI labs stop training systems more powerful than GPT-4 for at least six months.

The demand was urgent: governance and safety research were falling dangerously behind, and the window to catch up was closing fast. We are now at the end of the sixth six-month cycle since that letter went out. There was no pause. Things sped up. Nothing fundamentally broke.

Nothing about that panicked call for a pause was tailored to the complexities of AI development and diffusion. We were told that continuing to scale advanced AI without a coordinated halt risked catastrophic outcomes: loss of control over systems, destabilization of institutions, large-scale economic shocks, even existential risk. This wasn’t a distant prediction. They claimed the moment was already here, and continuing past GPT-4 without interruption was reckless.

But GPT-4 became GPT-4o, then o3, and now GPT-5. Significant progress was also made on Grok, Gemini, Claude, Llama, Mistral, and dozens of others. Global investment in AI is predicted to exceed a half trillion dollars in 2026. The pause advocates were wrong—but the more important question is why.

The pause argument fundamentally misunderstands how technological progress works. Progress is not a controlled industrial process that can be halted while governance catches up. The incremental, iterative approach to releasing technology is how we discover constraints, surface real problems, develop solutions, and build the institutional knowledge that leads to reason-based, responsive governance. Stopping AI three years ago would not have made AI safer. It would likely have slowed the research that makes more reliable and trustworthy AI possible. Case in point: GPT-5 hallucinates 80 percent less frequently than o3.

There is a second, more uncomfortable fact. Not only did we opt to accelerate, but over the past three years no one—including the signatories themselves—proposed a feasible governance regime the pause was supposed to buy time for. That silence is damning. Their entire argument rested on a two-part claim: unrestricted development plus no governance architecture equals catastrophe. We got both conditions. The catastrophe never came.

AI systems built during those six cycles turned out to be legible, testable, and constrained by the institutions deploying them. They did not seize control of critical infrastructure, autonomously self-replicate, or destabilize governments. What emerged instead was iterative and ordinary: markets creating accountability, institutions adapting, safety practices improving through actual deployment experience.

Has everything been perfect? Of course not. People will use powerful tools toward bad ends, as they do with every useful tool. But those troubling outcomes are evidence of familiar human failures, not systems escaping human control—and they are exactly the kind of problems that can only be surfaced and addressed through use.

The data center proposals now circulating in Congress apply the same logic one level down the stack. If you can’t stop the models directly, stop the power. If you can’t stop the power, stop the permits. The mechanism changes; the logic doesn’t. And the logic has already been tested.

What the pause advocates have never answered is simple: paused compared to what? You cannot design institutions for technology you’ve frozen. You can only design institutions for technology you understand. And you only understand it by building and deploying it.

We already ran the experiment, and the catastrophic predictions failed to materialize. Safety improved through deployment, not despite it. The scenario that demanded a pause never arrived, and neither did the governance architecture the pause was supposed to make possible.

They’ve been wrong six times over. There’s no reason to start taking them seriously now.

____

ABOUT THE WRITERS

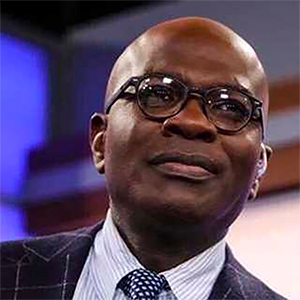

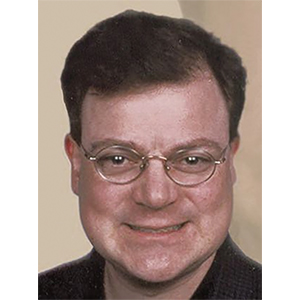

Chris Koopman is the CEO of the Abundance Institute. Kevin Frazier is a Senior Fellow with the Abundance Institute and leads the AI Innovation and Law Program at the University of Texas School of Law.

_____

©2026 Tribune Content Agency, LLC.
