Automotive

Larry Printz: Maserati GranCabrio Folgore: A healthy dose of sexy Italian automotive lightning

Ooooh. That’s pretty, isn’t it? It’s Maserati’s newest EV, the 2025 Maserati GranCabrio Folgore, a pure battery electric, two-door, four-seat convertible, a model in a class of one. It took nearly eight years to arrive from initial conception, but there’s a reason for that.

“We knew we had to create rolling sculpture,” said ...Read more

The first big-rig hydrogen fuel station in the US opens in California

OAKLAND, California — The first commercial hydrogen fuel station for big-rig trucks in the U.S. is up and running at the Port of Oakland, a baby step toward what hydrogen proponents see as a clean new future for long-haul trucking.

The small station, now serving 30 hydrogen fuel-cell trucks, could mark the start of a nationwide network for ...Read more

Auto review: The Genesis G90 is cool just sitting in your driveway

OAKLAND COUNTY, Michigan — Luxury cars are becoming Brookstone gadget stores on wheels. Who needs to drive them? They’re just fun to play with.

Take the 2024 Genesis G90 sitting in my driveway.

With the key in my pocket, I walked up to the Genesis and it rolled out the red carpet. Make that lit carpet. A Genesis logo splashed on the ground...Read more

Auto review: 2024 BMW X6 is nothing short of stunning

The luxury midsize coupe is becoming increasingly popular in the consumer market, and BMW is easily the leader of the pack with its X6. Featured in Grasso's Garage this week is the BMW X6 xDrive40i, adorned in Aventurin Red Metallic — a fiery red/orange metallic hue that is a stunning display of beauty, at least to my eyes.

Equipped with an ...Read more

Electric vehicle 'workforce hub' coming to Michigan, White House says

WASHINGTON — Michigan will be among four new "workforce hubs" designated to help prepare workers for new manufacturing jobs, the White House said Thursday. The Michigan hub will focus specifically on electric vehicles.

The effort — a collaboration between federal and state agencies — is meant to train or retrain workers through ...Read more

Toyota is investing $1.4 billion to build another all-electric SUV in US

Toyota Motor Corp. is moving ahead with plans to manufacture and sell more electric vehicles in the U.S. by investing $1.4 billion at a plant in Indiana, the Japanese carmaker’s second such announcement this year.

The Princeton, Indiana, facility — which currently makes four gas and hybrid models — will add an unnamed all-electric, three...Read more

Ford Q1 profits fall 28% as Ford Blue hit from F-150 ramp-up

Ford Motor Co. said Wednesday it made $1.3 billion in net income in the first quarter of 2024, a 28% decrease year-over-year, as earnings from Ford's gas-engine and hybrid business tumbled from the ramp-up of the refreshed 2024 F-150 pickup truck.

The profit, which represented earnings of 33 cents per diluted share, came on $42.8 billion in revenue, ...Read more

GM's CEO no longer highest paid Detroit auto executive

General Motors Co. CEO Mary Barra is no longer the highest-paid Detroit automotive company chief executive, receiving a 2023 compensation package totaling $27.8 million — trailing Stellantis NV CEO Carlos Tavares' $39.491 million payout.

Barra, 62, saw her pay drop 4% from her 2022 compensation of $28.97 million with a decline in her bonus ...Read more

Tesla soars as Musk's cheaper EVs calm fears over strategy

Tesla Inc. shares surged after Elon Musk vowed to launch less-expensive vehicles as soon as late this year, easing concerns about disappointing earnings results and diminished growth prospects.

The automaker said Tuesday it’s accelerating new models using aspects of a next-generation platform that had been slated for the second half of next ...Read more

GM beats profit expectations in first quarter, increases guidance for 2024

General Motors Co. beat Wall Street expectations on Tuesday, delivering first-quarter net income of $3 billion on revenue of $43 billion.

The Detroit automaker increased its guidance for the year of adjusted earnings to be in a range of $12.5 billion to $14.5 billion, up from $12 billion to $14 billion. The automaker expects its net income for ...Read more

Honda nears deal with Canada to boost electric vehicle capacity

Canada is on the verge of an agreement with Honda Motor Co. that would see the Japanese firm build electric vehicles and their components in the province of Ontario, according to people familiar with the matter.

The deal, expected to be announced within a week, involves a multibillion-dollar commitment by Honda for new facilities to process ...Read more

Elon Musk and Tesla: Is the CEO's controversial behavior responsible for company's struggles?

The richest man in the world says and does what he wants. And often, it’s contentious and provocative.

Tesla CEO Elon Musk has attacked U.S. election integrity, embraced white-supremacist propaganda and accused President Joe Biden of treason. He even smoked pot on Joe Rogan’s provocative podcast.

Today, the pioneering electric-car company ...Read more

'Keeps the momentum': What the UAW's Volkswagen win means for its organizing campaign

The United Auto Workers’ organizing victory at Volkswagen AG's plant in Tennessee is a critical early momentum-builder as the union turns to more auto and battery plants across the South, experts say, but further successes aren't a foregone conclusion.

The Volkswagen landslide in Chattanooga, where 73% of voting workers backed UAW ...Read more

Motormouth: Benefits of a gentle stop?

Q: I have come across motorists whose brake lights come on hundreds of feet before a stop light. Brake lights come on, the vehicle immediately slows and it eventually comes to a complete stop at the light. Strictly in terms of brake wear, does a gentle stop over a long distance and time wear out the brakes less than a firmer stop (no skid marks)...Read more

China's highflying EV industry is going global. Why that has Tesla and other carmakers worried

TAIPEI, Taiwan — The U.S.-China rivalry has a new flashpoint in the battle for technology supremacy: electric cars.

So far, the U.S. is losing.

Last year, China became the world's foremost auto exporter, according to the China Passenger Car Association, surpassing Japan with more than 5 million sales overseas. New energy vehicles accounted ...Read more

Georgia demands Rivian secure, maintain factory site

Electric vehicle maker Rivian said it is working to secure and maintain the site of its planned Georgia factory, as well as preparing the land for vertical construction as soon as it can move forward with the $5 billion project.

Letters exchanged by Rivian and lawyers for the state of Georgia and a local joint development authority show the ...Read more

Tesla co-founder JB Straubel has built an EV battery colossus

In the scrublands of western Nevada, Tesla co-founder JB Straubel stood on a bluff overlooking several acres of neatly stacked packs of used-up lithium-ion batteries, out of place against the puffs of sagebrush dotting the undulating hills. As if on cue, a giant tumbleweed rolled by. It was the last Friday of March, and Straubel had just struck ...Read more

How Ford's most profitable business is defying the EV slump

While malaise sets in across much of the electric vehicle market, there’s a corner that’s still going strong, where buyers like Chris Russo show little concern about high prices or range anxiety or spotty charging infrastructure.

The co-founder of Elite Home Care, a South Carolina-based company, bought his first EV more than two years ago ...Read more

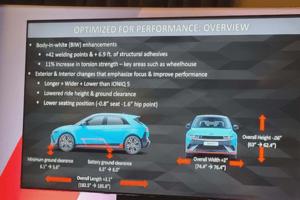

Auto review: Flat out in the Hyundai Ioniq 5 N electric track rat

LAGUNA SECA, California — Out of slow, 90-degree Turn 11 onto the Laguna Seca Raceway’s pit straight, my 2025 Hyundai Ioniq 5 N performance SUV instantly put down 545 pound-feet of torque and 601 horsepower to all four fat Pirelli P Zero performance tires. No downshift to second gear. No turbo lag. Just pure thrust. Zot! Seconds later, the EV ...Read more

Auto review: 2024 Lexus TX PHEV displays beauty and luxury

You heard it here first! As a Volvo fan myself, I don't see a lot of manufacturers that do it better, as the competition seems to always be chasing Volvo’s design, comfort and pricing. But with Lexus sharpening their pencil, we may have ourselves something here. I give you the 2024 Lexus TX550h+ Luxury.

This six-passenger hybrid SUV takes a ...Read more
