Politics

/ArcaMax

Editorial: A GOP speaker risks his neck to finally help a desperate Ukraine. He deserves Democratic support

It’s to America’s shame that Ukrainian civilians are dying in Russian missile and drone attacks for lack of defensive weaponry due, at least in part, to the inability so far of the U.S. House of Representatives to approve military aid. Just Wednesday, three Russian missiles hit an eight-story apartment building in the northern Ukrainian city...Read more

COUNTERPOINT: We need less, not more, immigration

We often hear from politicians that they are “against illegal immigration but in favor of legal immigration.”

Although this refrain may sound safely moderate, it actually ducks the critical policy questions related to immigration: Who may come? How many? And how do we enforce the limits we set?

Even as a record level of legal and illegal ...Read more

Commentary: Making sense of the 'wage gap'

What’s behind the wage gap between men and women?

It has narrowed recently. In 2023, women’s median weekly wages of $1,005 equaled 84% of men’s $1,202 in weekly wages. That’s an all-time high, and a distinct uptick from a fairly steady 80% to 82% between 2004 and 2020.

Yet 84% is still not 100%, even though equal pay for equal work has...Read more

Commentary: In Utah, the Capitol really is the people's house

Many state capitol buildings feel unapproachable, tucked away downtown or barricaded behind lanes of noisy traffic. Not so in Salt Lake City. The Utah Capitol sits at the mouth of a verdant canyon, flanked by parks and neighborhoods, perched below the Wasatch Mountains and presiding over the city with authority. It’s a grand building, just ...Read more

Commentary: Civic engagement should not be performed 'All By Myself'

With the death of singer-songwriter Eric Carmen last month and Earth Day coming up, I got to thinking about Carmen’s song “All By Myself” and how deeper forms of activism are both essential to making change and a powerful antidote to our growing epidemic of loneliness.

In a New York Times essay last year, U.S. Surgeon General Vivek Murthy...Read more

Commentary: Don't want Biden or Trump to have so much power? Maybe the US needs a poly-presidency

At the Constitutional Convention in 1787, Pennsylvania delegate James Wilson brought up a seemingly un-American idea. He said the executive branch of America’s government should be headed by a single person: a president.

Several constitutional delegates objected. A single leader at the helm? Virginia delegate Edmund Randolph said this was the...Read more

POINT: Resolving border crisis requires increasing legal migration

Given former President Donald Trump’s rhetoric during the Republican primaries, we can expect immigration enforcement on our southern border to be a major focus of his presidential campaign.

However, any solution to our border difficulties must entail reforms that make legal immigration a more plausible option for would-be migrants.

In a ...Read more

Editorial: It's 76 shots that are hard for today's Chicago to talk about, but that kill a kid just the same

Chicago police recovered 76 casings after the Saturday night massacre in the 2000 block of West 52nd Street in Chicago. They did not recover the life of Ariana Molina, 9.

Nor did they prevent the sprayed bullets from an automatic weapon from wounding two young boys, ages 8 and 9.

Nor could they prevent the shooting of a 1-year-old baby, who ...Read more

Editorial: If 10 straight months of record-breaking heat isn't a climate emergency, what is?

Californians have had weekend after weekend of cool, stormy weather and the Sierra Nevada has been blessed with a healthy snowpack. But the reality is that even the last few months have been more than 2 degrees hotter than average.

The planet is experiencing a horrifying streak of record-breaking heat, with March marking the 10th month in a row...Read more

Francis Wilkinson: Sarah Huckabee Sanders gets her Trump on

After Arkansas Gov. Sarah Huckabee Sanders purchased a very high-priced lectern for her very poor state, the state legislature initiated an audit, which was released Monday. As corruption goes, the report depicts a small-time, dimly comic routine — a state purchase seemingly padded with a generous allowance for a political crony of the ...Read more

Andreas Kluth: Israel exposes the contradictions in Biden's foreign policy

Press conferences can be cringey anywhere, but Monday’s briefing at the U.S. State Department was in a class of its own. For 50 tortured minutes, Matt Miller, the spokesperson, struggled valiantly but haplessly, not so much against the journalists in the room as against the cumulative force of the contradictions in President Joe Biden’s ...Read more

Commentary: Think life just keeps getting worse? Try being nostalgic -- for the present

Nostalgia seems harmless enough, and then someone starts earnestly — absurdly — glamorizing the Stone Age.

“Damn can you imagine being a human during the paleolithic age,” tweeted a self-described “eco-socialist” podcaster in September 2021. “Just eating salmon and berries and storytelling around campfires and stargazing … no ...Read more

Leonard Greene: OJ Simpson ex-teammate says trial showed 'Black man can buy justice like a white man'

If there is anybody with a unique perspective on the O.J. Simpson saga, it’s Boston lawyer Eddie Jenkins.

Not only is Jenkins, a former president of the Massachusetts Black Lawyers Association, well versed on the subject of jury nullification, he had a front row seat to the vanity show as Simpson’s football teammate.

Jenkins has three ...Read more

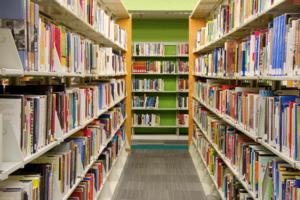

Commentary: Fighting back against library book bans

A bill recently passed by the West Virginia House of Delegates is one of the latest attempts to censor educational materials. If the measure becomes law, it would make librarians and other educators criminally liable for showing obscene materials to children who are not accompanied by an adult. Librarians and teachers could then face felony ...Read more

Editorial: Biden shrugs at inflation

Over the past three years, the Biden White House has gradually passed through the five stages of grief when it comes to inflation. President Joe Biden has now reached the “acceptance” stage. The American public, however, may not be so accommodating.

Denial came first. Recall Biden’s famous assertion in July 2021 that, “There’s nobody ...Read more

Editorial: PT Barnum, suckers and the plummeting stock price of Truth Social

P.T. Barnum may or may not have said, “There’s a sucker born every minute.” He gets the credit in the popular mind.

Whoever was the true originator of that 19th century classic observation likely would get a proper chuckle out of the ways that Donald Trump continues to make saps out of a dismayingly large slice of the American public.

...Read more

Commentary: Councilmember Shahana Hanif ignores Jew-hatred

In 2021, when Shahana Hanif was elected to represent Brooklyn’s 39th Council District, progressives throughout the district were excited and optimistic. A Kensington-born daughter of Bangladeshi immigrants, Hanif was the first Muslim woman elected to the City Council, with a promise and commitment to represent every resident of the district. ...Read more

Editorial: Iowa follows Texas folly: Courts must stop state-level immigration enforcement

Last week, Iowa Gov. Kim Reynolds followed the lead of Texas counterpart and grandstander extraordinaire Greg Abbott in signing a bill that would make immigration violations a state-level crime.

This domino effect is the entirely predictable result of courts that have let this charade go on. In briefly allowing Texas’ measure to go into ...Read more

Trudy Rubin: Why swift Israeli reaction against Iran could lead to disaster

BRUSSELS — Viewing live video footage of Iranian drones whizzing over Jerusalem’s hills on Saturday — marked by small, flying spots of light that exploded into flares when they were hit — was like watching the passage from one Mideast era to another.

The shadow war between Jerusalem and Tehran that has gone on for decades burst into ...Read more

Marc Champion: Iran hawks want to strike now. They're wrong

John Bolton has called for Israel to respond to Iran’s massive, failed weekend missile barrage by destroying its nuclear fuel facilities. In one sense, that’s no surprise; the former US national security adviser has rarely seen a problem he didn’t think could be bombed into submission.

Yet he’s far from alone in believing Tehran’s ...Read more