Automotive

GM beats profit expectations in first quarter, increases guidance for 2024

General Motors Co. beat Wall Street expectations on Tuesday, delivering first-quarter net income of $3 billion on revenue of $43 billion.

The Detroit automaker increased its guidance for the year of adjusted earnings to be in a range of $12.5 billion to $14.5 billion, up from $12 billion to $14 billion. The automaker expects its net income for ...Read more

Honda nears deal with Canada to boost electric vehicle capacity

Canada is on the verge of an agreement with Honda Motor Co. that would see the Japanese firm build electric vehicles and their components in the province of Ontario, according to people familiar with the matter.

The deal, expected to be announced within a week, involves a multibillion-dollar commitment by Honda for new facilities to process ...Read more

Elon Musk and Tesla: Is the CEO's controversial behavior responsible for company's struggles?

The richest man in the world says and does what he wants. And often, it’s contentious and provocative.

Tesla CEO Elon Musk has attacked U.S. election integrity, embraced white-supremacist propaganda and accused President Joe Biden of treason. He even smoked pot on Joe Rogan’s provocative podcast.

Today, the pioneering electric-car company ...Read more

'Keeps the momentum': What the UAW's Volkswagen win means for its organizing campaign

The United Auto Workers’ organizing victory at Volkswagen AG's plant in Tennessee is a critical early momentum-builder as the union turns to more auto and battery plants across the South, experts say, but further successes aren't a foregone conclusion.

The Volkswagen landslide in Chattanooga, where 73% of voting workers backed UAW ...Read more

Motormouth: Benefits of a gentle stop?

Q: I have come across motorists whose brake lights come on hundreds of feet before a stop light. Brake lights come on, the vehicle immediately slows and it eventually comes to a complete stop at the light. Strictly in terms of brake wear, does a gentle stop over a long distance and time wear out the brakes less than a firmer stop (no skid marks)...Read more

China's highflying EV industry is going global. Why that has Tesla and other carmakers worried

TAIPEI, Taiwan — The U.S.-China rivalry has a new flashpoint in the battle for technology supremacy: electric cars.

So far, the U.S. is losing.

Last year, China became the world's foremost auto exporter, according to the China Passenger Car Association, surpassing Japan with more than 5 million sales overseas. New energy vehicles accounted ...Read more

Georgia demands Rivian secure, maintain factory site

Electric vehicle maker Rivian said it is working to secure and maintain the site of its planned Georgia factory, as well as preparing the land for vertical construction as soon as it can move forward with the $5 billion project.

Letters exchanged by Rivian and lawyers for the state of Georgia and a local joint development authority show the ...Read more

Tesla co-founder JB Straubel has built an EV battery colossus

In the scrublands of western Nevada, Tesla co-founder JB Straubel stood on a bluff overlooking several acres of neatly stacked packs of used-up lithium-ion batteries, out of place against the puffs of sagebrush dotting the undulating hills. As if on cue, a giant tumbleweed rolled by. It was the last Friday of March, and Straubel had just struck ...Read more

How Ford's most profitable business is defying the EV slump

While malaise sets in across much of the electric vehicle market, there’s a corner that’s still going strong, where buyers like Chris Russo show little concern about high prices or range anxiety or spotty charging infrastructure.

The co-founder of Elite Home Care, a South Carolina-based company, bought his first EV more than two years ago ...Read more

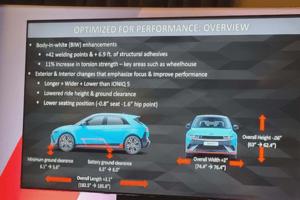

Auto review: Flat out in the Hyundai Ioniq 5 N electric track rat

LAGUNA SECA, California — Out of slow, 90-degree Turn 11 onto the Laguna Seca Raceway’s pit straight, my 2025 Hyundai Ioniq 5 N performance SUV instantly put down 545 pound-feet of torque and 601 horsepower to all four fat Pirelli P Zero performance tires. No downshift to second gear. No turbo lag. Just pure thrust. Zot! Seconds later, the EV ...Read more

Auto review: 2024 Lexus TX PHEV displays beauty and luxury

You heard it here first! As a Volvo fan myself, I don't see a lot of manufacturers that do it better, as the competition seems to always be chasing Volvo’s design, comfort and pricing. But with Lexus sharpening their pencil, we may have ourselves something here. I give you the 2024 Lexus TX550h+ Luxury.

This six-passenger hybrid SUV takes a ...Read more

Auto review: Two new Toyotas sure to satisfy your soul

Toyota has long been America’s favorite automaker, even if it hasn't always topped the sales charts. Certainly, it’s the world’s largest auto manufacturer, a spot once claimed by General Motors. So, when Toyota introduces two new models, it’s certainly big news. Better yet, along with the news came the chance to briefly experience both ...Read more

'This feels totally different': For 3rd time, VW workers mull joining UAW

CHATTANOOGA, Tennessee — Some are betting they will make history this week as Volkswagen AG workers vote on whether to join the United Auto Workers in this southern auto-producing state, where a right-to-work law is ingrained in its constitution.

Those pushing for unionization at the sprawling plant surrounded by the mountains of East ...Read more

Mercedes-Benz workers to vote on whether to join the UAW in May

Employees at Mercedes-Benz Group's assembly and battery plants outside Tuscaloosa, Alabama, will vote whether to join the United Auto Workers from May 13-17, the National Labor Relations Board said Thursday.

The election will be the Detroit-based union's second at a foreign-owned assembly plant following the launch of its $40 million organizing...Read more

Construction paused at VinFast's NC site as carmaker seeks a smaller footprint

Nearly nine months after VinFast broke ground on its planned $4 billion electric vehicle factory in North Carolina, construction at the Chatham County site has stalled while local officials await updated building plans from the Vietnamese carmaker.

Chatham County confirmed Tuesday what News & Observer drone footage makes clear: No significant ...Read more

Tesla asks investors to approve Musk's $56 billion pay again

Tesla Inc. will ask shareholders to vote again on the same $56 billion compensation package for Chief Executive Officer Elon Musk that was voided by a Delaware court early this year.

In its proxy filing issued Wednesday, Tesla also said it will call a vote on moving the company’s state of incorporation to Texas from Delaware. The carmaker ...Read more

Broken and unreliable EV chargers become a business opportunity for LA's ChargerHelp

Right place, right time, with an eye for opportunity, a commitment to economic growth for all, and a will to get things done. That’s entrepreneur Kameale Terry, co-founder of ChargerHelp, a Los Angeles startup.

She’s tackling a modern problem — the sorry state of electric vehicle public charging stations — while training an often-...Read more

Ford F-150 Lightnings now shipping after February stop order

Ford Motor Co. says it's shipping 2024 F-150 Lightning electric pickup trucks, and the vehicles now are available to order online.

The automaker on Feb. 9 had placed a stop-shipment on the all-electric trucks made at the Rouge Electric Vehicle Center in Dearborn because of an undisclosed issue resulting in the company saying it was extending ...Read more

'Threaten our jobs and values': Southern politicians ramp up campaign against UAW organizing

Political opposition to the United Auto Workers’ southern organizing push is cranking up ahead of a first test of the union’s strength this week at Volkswagen AG’s Tennessee plant, where a worker vote on whether to join the union runs Wednesday to Friday.

A Tuesday joint statement from the Republican governors of Alabama, Tennessee, ...Read more

How the UAW is winning over new plants -- starting with Volkswagen

The United Auto Workers is on the cusp of a significant milestone in its audacious effort to grow by 150,000 people across 13 automakers, including Tesla Inc., BMW AG and Nissan Motor Co.

This week, a Volkswagen AG factory will vote on whether to become the only foreign commercial carmaker unionized in the US. It would also be the first vehicle...Read more

Popular Stories

- Honda nears deal with Canada to boost electric vehicle capacity

- GM beats profit expectations in first quarter, increases guidance for 2024

- 'Keeps the momentum': What the UAW's Volkswagen win means for its organizing campaign

- Henry Payne: Delivering packages in Rivian's Amazon EV truck

- Elon Musk and Tesla: Is the CEO's controversial behavior responsible for company's struggles?