Current News


Michigan town's pot bonanza turns into a marijuana melee over corruption claims

MENOMINEE, Mich. — When this small town in the Upper Peninsula was working on a law allowing the sale of marijuana in 2020, it was warned that restricting the number of dispensaries could lead to a legal quagmire.

The town, which is just across the border from Wisconsin, where pot is prohibited, went ahead and limited the number anyway. ...Read more

Denver police and first responders have visited hotel shelters hundreds of times. Are they safer than the street?

DENVER — Mayor Mike Johnston says he raised concerns with the Salvation Army about security at a northeast Denver hotel-turned-homeless shelter the organization runs for the city in early December, on the first day residents moved in.

But for more than three months, those concerns went unaddressed, Johnston told The Denver Post. Then, on ...Read more

States race to restrict deepfake porn as it becomes easier to create

After a 2014 leak of hundreds of celebrities’ intimate photos, Uldouz Wallace learned that she was among the public figures whose images had been stolen and disseminated online.

Wallace, an actress, writer and social media influencer, found out the images were ones her ex had taken without her consent and had threatened to leak.

Over the ...Read more

Ten doctors on FDA panel reviewing Abbott heart device had financial ties with company

When the FDA recently convened a committee of advisers to assess a cardiac device made by Abbott, the agency didn’t disclose that most of them had received payments from the company or conducted research it had funded — information readily available in a federal database.

One member of the FDA advisory committee was linked to hundreds of ...Read more

Georgia election bills seek to satisfy skeptical Republicans

ATLANTA — Conservative election activists got what they wanted from Georgia lawmakers this year, with a series of bills that cater to their demands for heightened scrutiny of ballots and voter registrations.

The bills would grant many wishes of skeptics who now say they’re starting to believe in elections again, nearly four years after ...Read more

California fails to adequately help blind and deaf prisoners, US judge rules

SACRAMENTO, Calif. — Thirty years after prisoners with disabilities sued the state of California and 25 years after a federal court first ordered accommodations, a judge found that state prison and parole officials still are not doing enough to help deaf and blind prisoners — in part because they are not using readily available technology ...Read more

Washington state has passed lots of new gun laws. Could they be in legal trouble?

SEATTLE — When a Cowlitz County judge ruled last week that Washington's ban on high-capacity magazines is unconstitutional, he added one line, on Page 43 of his 55-page opinion, that could just be a little-noticed throwaway, or could prove shockingly prescient.

There are, Judge Gary Bashor wrote, "few, if any, historical analogue laws by ...Read more

City-country mortality gap widens amid persistent holes in rural health care access

In Matthew Roach’s two years as vital statistics manager for the Arizona Department of Health Services, and 10 years previously in its epidemiology program, he has witnessed a trend in mortality rates that has rural health experts worried.

As Roach tracked the health of Arizona residents, the gap between mortality rates of people living in ...Read more

Senate leaders seek quick action on key surveillance authority

WASHINGTON — The House dispensed with a procedural issue Monday on a bill that would renew a powerful surveillance authority for two years, as Senate leaders of both parties stressed the need to reauthorize the program before it lapses on Friday.

Majority Leader Charles E. Schumer, D-N.Y., said Monday the Senate “must finish approving ...Read more

VP Kamala Harris raises abortion issue in Las Vegas

LAS VEGAS — Vice President Kamala Harris praised Nevada for its abortion laws during a Las Vegas visit Monday, just days after Arizona’s state Supreme Court ruled that a near-total abortion ban from 1864 is enforceable.

In her speech, Harris said that women’s reproductive rights are at stake this election.

“This is not about politics, ...Read more

White House issues worker protections for pregnancy termination

WASHINGTON — The Biden administration Monday issued regulations enforcing worker protections for women who have had abortions, miscarriages or fertility issues that require time off work, despite heavy Republican opposition to the move.

The regulation from the Equal Employment Opportunity Commission implements congressional legislation that ...Read more

Henderson police give timeline of multiday police standoff

The Henderson Police Department gave a timeline of the multiday police standoff that ended Sunday with a person found dead.

Police said in a news release Monday that the suspect barricaded inside a home for nearly two days was connected to a violent felony case and was “armed and making suicidal statements, refusing to surrender.”

On ...Read more

Package thief ruins Bay Area bride-to-be's special day

REDWOOD CITY, Calif. — A porch pirate has spoiled one Bay Area bride-to-be’s wedding plans.

On April 11, between 8:24 p.m. and 8:46 p.m., a suspect stole several packages off a doorstep in the 800 block of Adams Street in Redwood City, according to the Redwood City Police Department.

A wedding dress worth $2,000 was inside one of the ...Read more

Berkeley schools chief will testify at congressional hearing over antisemitism charges

As fallout over the Israel-Hamas war grows, the head of the embattled Berkeley public school district is being summoned to Washington, D.C., to testify in front of congressional members amid allegations of antisemitism in her schools.

Berkeley Unified Superintendent Enikia Ford Morthel said Monday that she would travel to the nation's capital ...Read more

US envoy heads to Korean border to keep pressure on Pyongyang

The U.S. ambassador to the United Nations headed to the demilitarized zone dividing the two Koreas, ramping up pressure on North Korea as sanctions enforcement was dealt a heavy blow.

Ambassador Linda Thomas-Greenfield went to the buffer-zone border Tuesday in the highest-profile visit by a Biden administration official since Vice President Kamala ...Read more

Kentucky's anti-DEI higher ed bill dies a second time

It’s not often that the Republican-led Kentucky legislature doesn’t pass a priority piece of legislation addressing a hot-button issue among conservatives.

But that’s just what happened with Senate Bill 6, which was aimed at restricting diversity, equity and inclusion — referred to as “DEI” — offices at public colleges and ...Read more

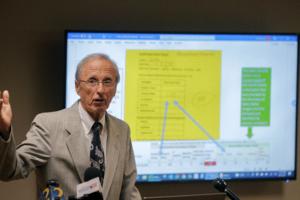

Dr. Werner Spitz, renowned former medical examiner who worked on JFK assassination and other high-profile cases, dies at 97

Dr. Werner Spitz — the renowned former Detroit-area medical examiner who used his expertise in forensic pathology to weigh in on some of the significant cases of the last century, from President John F. Kennedy's death to the Oakland County, Michigan, child murders — was remembered as a pioneer Monday.

His son, Jonathan Spitz, who lives in ...Read more

Bidens paid 23.7% effective federal rate in tax-day disclosure

WASHINGTON — President Joe Biden and first lady Jill Biden paid $146,629 in federal income taxes on a combined $619,976 in adjusted gross income in 2023 — meaning the first family paid an effective federal income tax of 23.7% — according to tax filings released by the White House.

The amount represents a slight uptick in both income and ...Read more

'Laws don't change unless they're challenged': Palm Beach County may try to curb hate speech

FORT LAUDERDALE, Fla. — Presidential election denial. COVID-19 conspiracy theories. Palm Beach County Commissioners perhaps have heard and seen it all.

Still, commissioners’ attention turned to what could be done to curb hate speech on April 2, after a group of people at a meeting spoke one by one at a lectern — making disparaging remarks...Read more

Michigan Gov. Gretchen Whitmer, a millionaire, set up new company before signing financial disclosure bills

LANSING, Michigan — Gov. Gretchen Whitmer’s lawyer filed paperwork to form a company he says is meant to manage her family’s personal wealth, four days after the Michigan Legislature signed off on the hotly debated details of a personal financial disclosure law for state officeholders.

The Democratic governor disclosed her ownership ...Read more

Popular Stories

- FBI boards ship amid investigation into what caused Francis Scott Key Bridge collapse

- Michigan Gov. Gretchen Whitmer, a millionaire, set up new company before signing financial disclosure bills

- Senate leaders seek quick action on key surveillance authority

- OJ Simpson feared he had CTE but his family has said a 'hard no' to brain study

- U.S. Supreme Court allows Idaho's ban on gender-affirming care to go into effect